AI Agency Near Me: Local Criteria and Pitfalls

Searching for "AI agency near me" is a logical reflex. When the stakes involve your data, your processes, and your teams, proximity feels reassuring: on-site meetings, workshops, responsiveness, understanding of the context.

The problem: geographical proximity is a useful criterion, but rarely a sufficient one. Some "local" agencies outsource delivery far from where they sell, others pitch a demo well but fail to integrate AI into your tools, and many sell "generic" AI with no ROI framework, security model, or adoption strategy.

This guide gives you concrete local criteria (the ones that really make a difference), the frequent pitfalls linked to the "near me" search, and a simple method to select a reliable partner for an SMB or scale-up.

Behind "near me", we almost always find one of these needs:

Accelerating scoping (you want a contact who comes in, observes, decides, and clarifies a backlog).

Reducing risk (GDPR, trade secrets, production errors, security).

Avoiding "demo theater" (you want integrated results, not a POC that impresses but serves no purpose).

Onboarding teams (training, change management, usage rules, adoption).

The good news: these objectives are achievable. The nuance: they do not depend solely on distance, but above all on execution capacity and governance.

A nearby agency can help you save time on the most underestimated part: observing real work.

To verify concretely:

Do they offer on-site (or hybrid) workshops with your business and IT teams?

Do they know how to produce actionable deliverables at the end of these workshops (use case registry, data mapping, KPI hypotheses)?

Does the workshop lead to a decision (go/no-go, instrumented pilot, 30-90 day roadmap)?

An agency can be "near you" and still never get past the slide deck. The quality signal is how concrete the work gets from the very first week.

In France and the EU, compliance is rarely "optional" as soon as you handle client data, HR, finances, or sensitive information.

Points to test:

GDPR: DPA (Data Processing Agreement), subcontractors, hosting locations, retention, minimization. The CNIL is a good reference point for principles.

AI Act: depending on the use case, obligations are coming into force or tightening (governance, transparency, risk management). Reference: official text and EU tracking.

Reversibility clauses: if you change suppliers (model, infra, provider), can you continue without rebuilding everything?

The real local criterion is not "they know the laws" but "they know how to take AI to production within these constraints".

A good local agency often has an ecosystem that reduces friction: hosting providers, cybersecurity and legal experts, integrators, cloud partners, data specialists.

Questions to ask:

Which partners get involved, in which cases, and with what responsibilities?

Who holds the contract and who is responsible in case of an incident?

If the network serves to clarify and secure delivery, it's a plus. If it serves to dilute responsibilities, it's a risk.

When AI is in production, there are concrete issues: erroneous responses, drift, API cost spikes, prompt injection, unforeseen data in a flow.

Local presence can help, but the real question is: do they have a "run" discipline?

To verify:

Is there an operations plan (monitoring, logs, alerting, procedures)?

Who does what (agency, your IT, your business team)?

Do the deliverables include documentation, tests, guardrails?
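One way to make "run" discipline tangible is to ask what an alert on API spend would look like. The sketch below is only an illustration under assumed values: the function name `is_cost_spike`, the `factor` of 2.0, and the `min_days` of history are all hypothetical, and a real setup would read costs from the provider's billing data rather than from a list.

```python
from statistics import mean


def is_cost_spike(recent_daily_costs: list[float], today_cost: float,
                  factor: float = 2.0, min_days: int = 3) -> bool:
    """Flag today's API spend when it exceeds `factor` times the recent average.

    Returns False when there is too little history to judge.
    """
    if len(recent_daily_costs) < min_days:
        return False
    return today_cost > factor * mean(recent_daily_costs)


# Hypothetical daily costs in euros for the last three days.
history = [10.0, 12.0, 11.0]
if is_cost_spike(history, today_cost=30.0):
    print("Alert: API spend is more than twice the recent average")
```

A rule this simple is not monitoring by itself, but an agency with real operations experience should be able to show its equivalent, along with who receives the alert and what the procedure is.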

To gauge their maturity, you can also ask how they draw on recognized frameworks such as the NIST AI RMF (AI Risk Management Framework).

In certain sectors, geographical proximity can give a real advantage: business vocabulary, customer habits, organizational constraints, seasonality, local players.

However, be careful: knowing your sector does not replace the ability to produce a reliable system.

A local agency can be very junior on AI in production, or on IS integration. Conversely, a partially remote team can be excellent if they deliver fast, document, test, and know how to integrate.

Indicators of seniority: quality of questions asked, ability to refuse a vague scope, presence of a measurement plan, existence of guardrails.

Typical red flags:

A promise to "put an agent everywhere" with no mention of data, integrations, or KPIs.

A demo of a generic chatbot with no connectors, no verifiable sources, and no human handoff.

A pitch focused solely on the model (GPT, Claude, Mistral) rather than on the workflow.

Useful AI in a company is almost always connected AI: to your CRM, your helpdesk, your documents, your processes, your rules.

Some structures are "near you" commercially, but production is scattered without visibility.

Simple questions to ask:

Who actually develops? Employees, freelancers, subcontractors?

Where are they located?

Who has access to your data and environments?

What is the plan if a key person disappears?

Outsourcing itself is not the problem; the absence of steering and clear responsibility is.

Risky phrases: "we are compliant" without details, "we store nothing" without architecture or contracts, "EU hosting" without specifying where prompts, logs, and tickets go.

What to demand:

DPA, register of subcontractors, retention rules.

Clarification on logs, supervision, and incident management.

Many AI projects fail not on code but on adoption: lack of training, no usage rules, no business ownership, no regular measurement routine.

A nearby agency that doesn't know how to train or support change management doesn't solve the problem.

The goal is to avoid two extremes:

Choosing solely based on proximity.

Choosing solely based on tech.

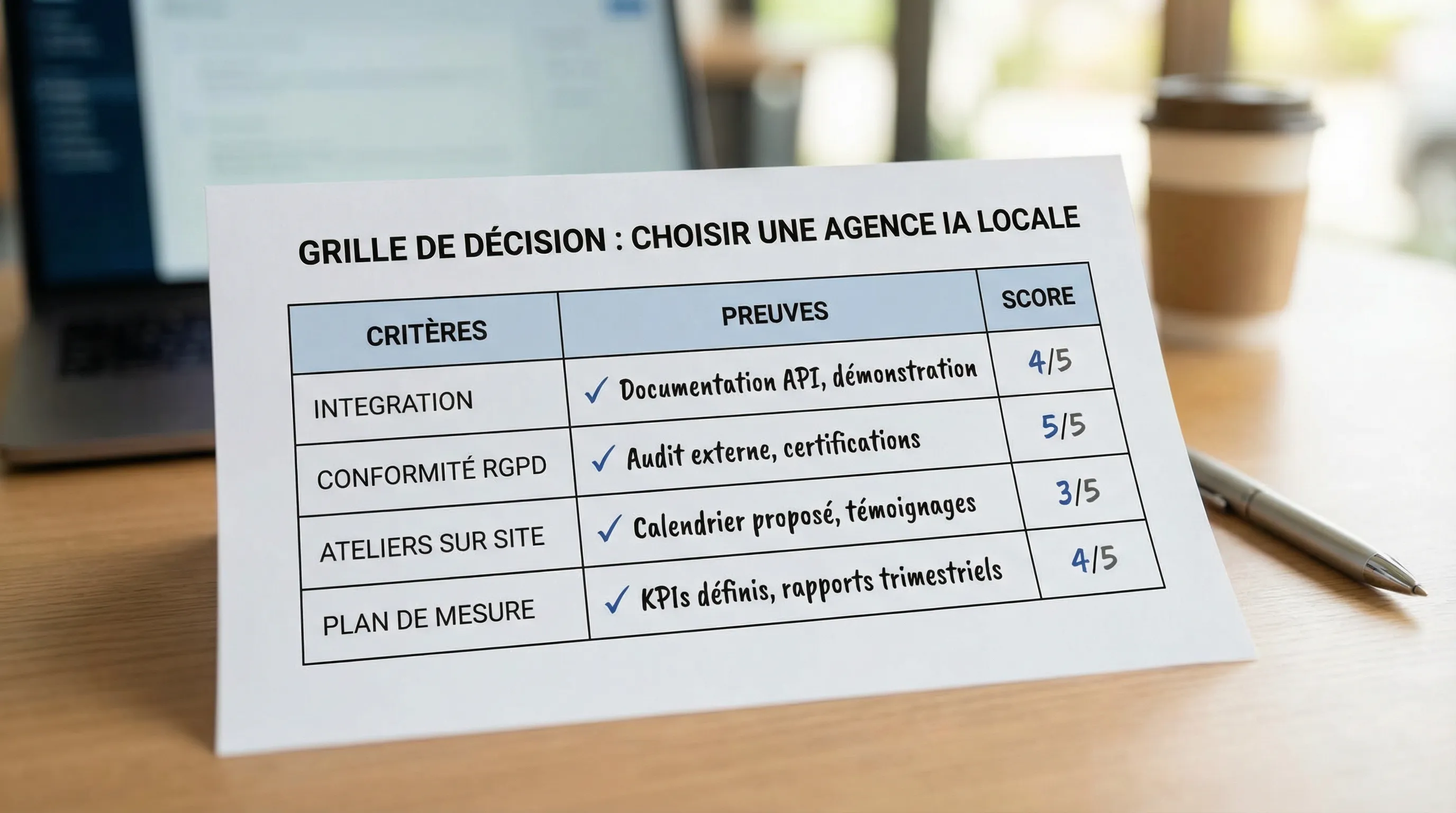

Here is a pragmatic grid, with the evidence to request.

| Axis | What you evaluate | Evidence to request | Why it's critical |
|---|---|---|---|
| Workshops & Scoping | Ability to understand your real processes | Example deliverables (use case registry, backlog, KPIs) | Without scoping, AI remains a demo |
| IS Integration | Connections to your tools and flows | Architecture diagram, examples of similar integrations | Non-integrated AI produces little ROI |
| Security & Compliance | GDPR, access, traceability | DPA, log policy, subcontractor management | Reduces legal and operational risk |
| Measurement & ROI | Baseline, KPIs, test protocol | Pilot scorecard, measurement method | Avoids endless POCs |
| Operations (Run) | Monitoring, costs, incidents | Runbook, responsibilities, SLA or process | A pilot without a run phase doesn't scale |
| Useful Proximity | Field presence when necessary | Intervention terms, availability | Accelerates adoption and decision-making |
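If you want to compare several agencies on the grid above, a weighted score keeps proximity in proportion to the other axes. The following is a minimal sketch, not a prescribed method: the axis keys, the percentage weights, and the two example agencies are all illustrative assumptions to adapt to your own priorities.

```python
# Axes mirror the grid above. The weights (in percent) are illustrative
# assumptions, not values prescribed by this guide; tune them to your context.
WEIGHTS = {
    "workshops_scoping": 20,
    "is_integration": 20,
    "security_compliance": 20,
    "measurement_roi": 15,
    "operations_run": 15,
    "useful_proximity": 10,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine per-axis ratings (0 = no evidence, 5 = strong evidence) into a 0-5 score."""
    missing = sorted(set(WEIGHTS) - set(ratings))
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for axis in WEIGHTS:
        if not 0 <= ratings[axis] <= 5:
            raise ValueError(f"rating for {axis} must be between 0 and 5")
    return sum(WEIGHTS[axis] * ratings[axis] for axis in WEIGHTS) / 100


# Two hypothetical candidates: one close by but thin on delivery evidence,
# one hybrid team with stronger integration and run evidence.
nearby_agency = {
    "workshops_scoping": 4, "is_integration": 2, "security_compliance": 2,
    "measurement_roi": 1, "operations_run": 1, "useful_proximity": 5,
}
hybrid_agency = {
    "workshops_scoping": 4, "is_integration": 4, "security_compliance": 4,
    "measurement_roi": 4, "operations_run": 3, "useful_proximity": 2,
}

for name, ratings in [("nearby", nearby_agency), ("hybrid", hybrid_agency)]:
    print(name, weighted_score(ratings))
```

With these assumed weights, the hybrid team scores higher despite its lower proximity rating, which reflects the point of the grid: rate each axis on the evidence actually provided, not on the sales conversation.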

These questions are designed to reveal the reality of execution in less than an hour.

What do you do on-site, and at what point in the project does it bring the most value?

Who participates in workshops on the agency side, and who decides on the client side?

On a comparable project, what really required a physical presence?

Which business KPI do you choose first, and how do you measure it before building?

What does your test protocol look like (representative cases, guardrails, quality)?

How do you handle model errors (sources, refusals, handoff, supervision)?

Which data must never be entered, which is allowed, and how do you classify it?

Where do prompts go, where are logs stored, and for how long?

Can you describe your approach to minimization and traceability?

If we change models or providers, what remains stable?

Which components are custom, which are standard, and which interfaces are documented?
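The questions on changing models or providers usually come down to whether business logic talks to a small, documented interface instead of a vendor SDK. Here is a minimal sketch of that idea; `TextModel`, `EchoModel`, `UppercaseModel`, and `answer_ticket` are hypothetical names invented for illustration, and the two model classes are stand-ins, not real provider clients.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only contract the business workflow depends on (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for provider A; a real adapter would call that provider's API."""

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class UppercaseModel:
    """Stand-in for provider B, with a different behavior behind the same interface."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def answer_ticket(model: TextModel, ticket: str) -> str:
    """Business logic stays the same whichever model is plugged in."""
    prompt = f"Summarize the customer request: {ticket}"
    return model.complete(prompt)


# Swapping providers changes one argument, not the workflow.
print(answer_ticket(EchoModel(), "refund for order 123"))
print(answer_ticket(UppercaseModel(), "refund for order 123"))
```

In this arrangement, what "remains stable" is the interface and the workflow around it; the provider adapter is the part you expect to rewrite.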

Even for an ambitious project, a solid agency knows how to propose a start that limits risk.

A good scenario (typical for SMB/scale-up):

Audit of opportunities and risks, then choice of 1 to 2 use cases "close to cash"

Instrumented pilot with a simple test protocol

Minimal integration into the workflow (not an isolated app)

Decision to scale based on a scorecard (value, quality, risks, costs)
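The scorecard decision in the last step can be written down before the pilot starts, so the go/no-go is not negotiated after the fact. The sketch below assumes a main KPI where higher is better; the field names and the thresholds (`MIN_UPLIFT`, `MIN_QUALITY`, `COST_CEILING`) are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class PilotScorecard:
    baseline_kpi: float       # main KPI before the pilot (higher is better)
    pilot_kpi: float          # same KPI measured during the pilot
    quality_pass_rate: float  # share of test cases passed, 0.0 to 1.0
    open_high_risks: int      # unresolved high-severity risks
    monthly_cost: float       # observed run cost during the pilot


# Hypothetical thresholds, agreed with the agency before the pilot starts.
MIN_UPLIFT = 0.15
MIN_QUALITY = 0.90
COST_CEILING = 500.0


def decide(card: PilotScorecard) -> tuple[str, list[str]]:
    """Return ("scale", []) or ("hold", blockers) across value, quality, risks, costs."""
    if card.baseline_kpi <= 0:
        raise ValueError("baseline_kpi must be positive to compute an uplift")
    blockers = []
    uplift = (card.pilot_kpi - card.baseline_kpi) / card.baseline_kpi
    if uplift < MIN_UPLIFT:
        blockers.append(f"value: uplift {uplift:.0%} is below {MIN_UPLIFT:.0%}")
    if card.quality_pass_rate < MIN_QUALITY:
        blockers.append(f"quality: pass rate {card.quality_pass_rate:.0%} is below {MIN_QUALITY:.0%}")
    if card.open_high_risks > 0:
        blockers.append(f"risks: {card.open_high_risks} high-severity risk(s) still open")
    if card.monthly_cost > COST_CEILING:
        blockers.append(f"costs: {card.monthly_cost:.0f} exceeds ceiling {COST_CEILING:.0f}")
    return ("scale" if not blockers else "hold", blockers)


card = PilotScorecard(baseline_kpi=100, pilot_kpi=125, quality_pass_rate=0.92,
                      open_high_risks=0, monthly_cost=400)
print(decide(card))
```

A "hold" result lists which of the four dimensions blocked the decision, which gives the next iteration a concrete target.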

If you want a structured base, Impulse Lab has already published useful resources on the subject, notably on essential criteria for choosing an AI agency and on the AI audit as a pragmatic entry point.

Here is a simple way to read it.

| Situation | Proximity recommended? | Why |
|---|---|---|
| Very field-based processes (ops, workshops with non-tech teams) | Yes, often | Faster observation and adoption |
| Highly regulated project (sensitive data, internal validation) | Rather yes | Alignment, governance, evidence, short iterations |
| Very technical project (integrations, architecture, LLMOps) | Not necessarily | Competence and discipline take precedence over distance |
| Need for training and onboarding | Yes, useful | In-person interaction accelerates reflexes and culture |
| You are mainly looking for "a reassuring provider" | Careful | Risk of choosing appearance over execution |

Without falling into a heavy tender process, aim for at least:

A clear scope (what the system does, and does not do)

3 to 5 KPIs (1 main KPI, 2 to 4 support metrics and guardrails)

An understandable architecture diagram (flows, data, integrations)

A test plan (cases, acceptance criteria, error management)

A security/GDPR clarification (DPA, subcontractors, retention)

An operations plan (who supervises, how we correct, how we control costs)

It is often at this stage that you will see if "near me" means "operational partner" or "local salesperson".

Impulse Lab is an agency that develops custom web and AI solutions and supports adoption via audits, integrations, automations, and training. If your search for "AI agency near me" comes from a need for rapid and controlled results, the most reliable approach is often:

start with an opportunity audit to prioritize 1 to 2 actionable use cases,

deliver in short cycles (Impulse Lab mentions weekly delivery and a client portal),

integrate AI into your existing tools rather than creating a silo,

measure impact and decide on scaling.

To discuss your context (local or multi-site) and see if an audit or a pilot makes sense, you can go through the site: impulselab.ai.

Leonard

Co-founder
