AI System: Minimal architecture for reliable deployment

Moving an AI demo to production is rarely a "model" problem. It's an **AI system** problem: how AI integrates into your tools, data, security, business rules, and daily operations.


For an SMB or scale-up, the goal isn't to build a "heavy" platform like a large corporation. The goal is to assemble a minimal architecture that makes deployment reliable, measurable, and maintainable, right from V1.

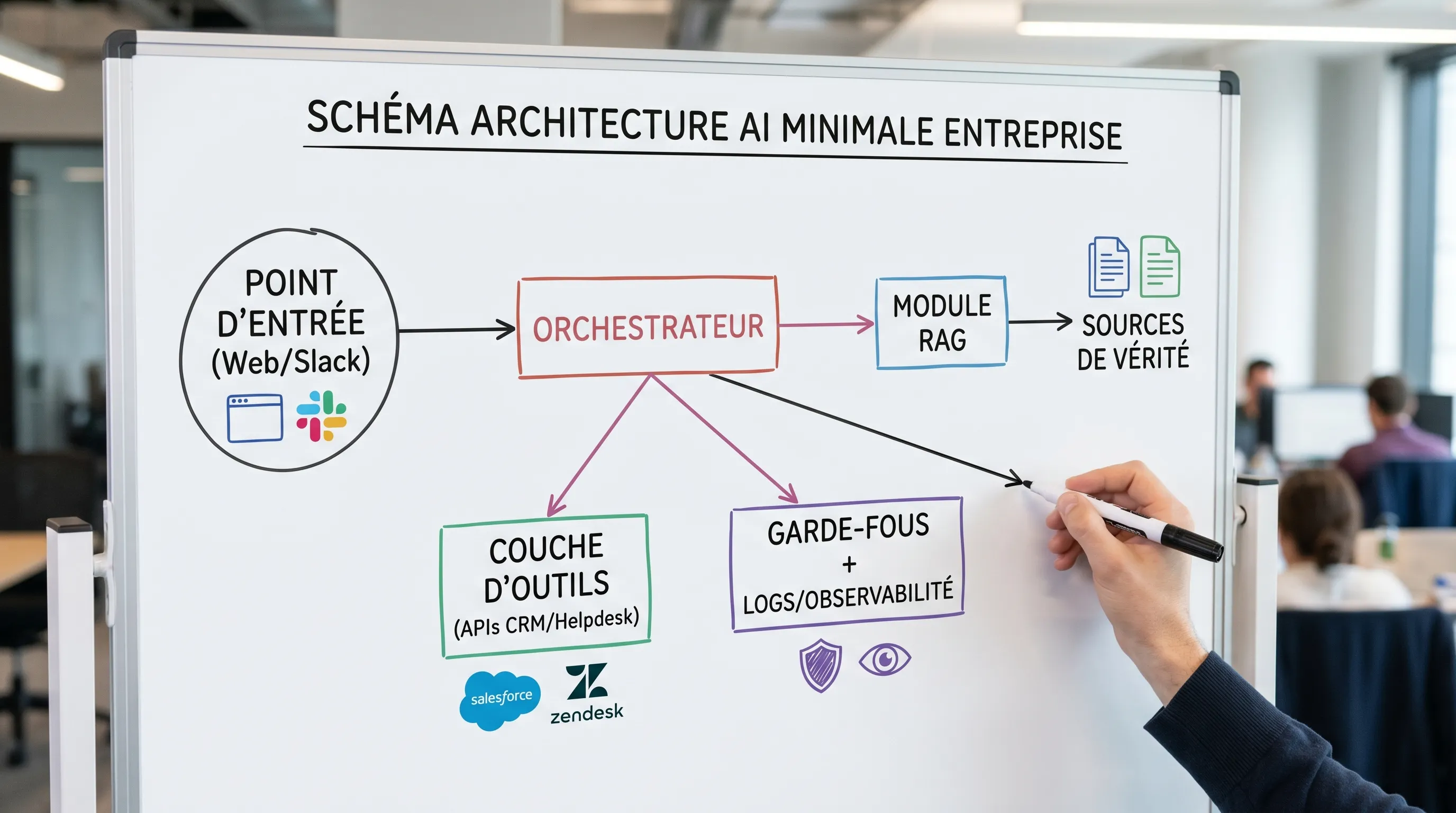

An AI system is the set of building blocks that allow a model (LLM, classifier, vision model, etc.) to produce operational value in a real workflow, with acceptable guarantees.

Practically, the model is just one block. An AI system also includes:

entry points (web app, Slack/Teams, CRM, helpdesk, internal API)

an orchestration layer (product logic and business logic)

controlled access to data (sources of truth, permissions)

guardrails (quality, security, compliance, validation)

observability (logs, metrics, costs)

a runbook (operations, incidents, updates)

If you only build "the prompt" or "the chatbot", you are building an artifact. If you build the blocks above, you are building a system.

A minimalist architecture is one that covers the dominant risks and production requirements, without multiplying components.

It helps you avoid three classic failures:

The AI doesn't integrate: it lives in a tab, not in the tools (CRM, support, back-office), so adoption drops.

Quality is unpredictable: no evaluation protocol, no verified sources, no versioning, so trust erodes.

Operations explode: uncontrolled costs, untraceable incidents, no owner, no rollback procedure.

In 2026, it is also the most pragmatic way to prepare your deployments for governance requirements (GDPR and EU AI Act).

The goal is not to impose a stack. The goal is to not forget anything essential.

Choose only one main channel to start: website widget, Slack, Teams, internal browser extension, or a dedicated page. The rule: the entry point must allow you to track usage.

From V1, log at a minimum:

the user (or a pseudonymized identifier)

the use case (intent, type of request)

the result (response provided, action taken, escalation)

the response time

Without this, you will have usage, but no way to manage it.
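A minimal sketch of such a log record in Python. The field names, the result labels, and the `pseudonymize` helper are illustrative assumptions, not a prescribed schema; adapt them to your own logging pipeline.

```python
import hashlib
import hmac
import json
import time
from dataclasses import asdict, dataclass
from typing import Optional


def pseudonymize(user_id: str, secret: bytes) -> str:
    """Return a stable keyed hash so usage can be tracked without storing the raw ID."""
    return hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


@dataclass
class InteractionLog:
    user: str         # pseudonymized identifier
    use_case: str     # intent or type of request
    result: str       # e.g. "answered", "action_taken", "escalated" (hypothetical labels)
    response_ms: int  # response time in milliseconds
    timestamp: float  # Unix time at which the response was produced


def build_log(user_id: str, use_case: str, result: str, started_at: float,
              secret: bytes, now: Optional[float] = None) -> str:
    """Serialize one interaction as a JSON line."""
    now = time.time() if now is None else now
    record = InteractionLog(
        user=pseudonymize(user_id, secret),
        use_case=use_case,
        result=result,
        response_ms=round((now - started_at) * 1000),
        timestamp=now,
    )
    return json.dumps(asdict(record))
```

One JSON line per interaction is enough to start: it can be aggregated later by use case, result, or user without changing the entry point.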

A useful AI quickly touches sensitive data. Your minimal architecture must integrate:

authentication (SSO if possible, otherwise managed accounts)

authorization (who has the right to see what, and do what)

"boundaries" between environments (test, pilot, production)

To properly frame this block, link it to your IAM approach and the principles described in your authentication and access management system.
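As an illustration, a deny-by-default authorization check could look like the sketch below. The roles, permission strings, and the `ROLE_PERMISSIONS` map are hypothetical; in practice they would come from your IAM or SSO groups.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map, normally sourced from your IAM.
ROLE_PERMISSIONS = {
    "support_agent": {"read:tickets", "read:kb"},
    "support_lead": {"read:tickets", "read:kb", "write:tickets"},
}


@dataclass(frozen=True)
class User:
    user_id: str
    role: str
    environment: str  # "test", "pilot" or "production"


def is_allowed(user: User, permission: str, environment: str) -> bool:
    """Deny by default: the environment must match and the role must grant the permission."""
    if user.environment != environment:
        return False  # boundary between environments
    return permission in ROLE_PERMISSIONS.get(user.role, set())
```

The orchestrator would call `is_allowed` before reading a source or triggering an action, so an unknown role or a mismatched environment never gets access by accident.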

The orchestrator is the part that transforms "a model" into a "product feature". It manages in particular:

context preparation (data, history, rules)

pattern selection (simple API, RAG, tooled agent)

routing (which logic depending on the intent)

output structuring (JSON, fields, citations)

This is also where you implement "reliable" rather than "magical" behaviors: rephrasing, asking for clarification, refusal, escalation.
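The routing and "reliable behavior" ideas above can be sketched as follows. The intents, the `ROUTES` table, and the confidence threshold are assumptions for illustration; the intent classifier and the model calls themselves are out of scope here.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional

# Hypothetical mapping from detected intent to pattern.
ROUTES = {"rephrase": "api", "policy_question": "rag", "create_ticket": "agent"}


@dataclass
class Decision:
    pattern: str  # "api", "rag", "agent", "clarify" or "escalate"
    message: str
    citations: List[str] = field(default_factory=list)


def route(intent: Optional[str], confidence: float, threshold: float = 0.6) -> Decision:
    """Pick a pattern, or fall back to clarification or escalation."""
    if intent is None or confidence < threshold:
        return Decision("clarify", "Could you clarify what you need?")
    if intent not in ROUTES:
        return Decision("escalate", "Forwarding this request to a human.")
    pattern = ROUTES[intent]
    return Decision(pattern, f"Handling '{intent}' with the {pattern} pattern.")


def to_json(decision: Decision) -> str:
    """Structured output: the same fields on every response."""
    return json.dumps(asdict(decision), sort_keys=True)
```

Keeping clarification and escalation as explicit outcomes of the router makes them measurable, instead of leaving them to the model's discretion.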

Most assistants fail because they improvise on topics where the company already has an official answer.

A well-designed RAG (Retrieval-Augmented Generation) connects AI to your sources of truth, instead of relying on frozen knowledge. If you want a definition and principles, see the RAG entry.

In a minimal architecture, "serious" RAG includes:

selected documents (not "the whole Drive")

indexing with metadata (source, date, owner, confidentiality level)

output with citations (or at least references)
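A sketch of how this metadata can be used, assuming a three-level confidentiality scale (hypothetical) and leaving the retrieval step itself (vector or keyword search) aside:

```python
from dataclasses import dataclass
from datetime import date
from typing import List

# Hypothetical confidentiality scale, from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "restricted": 2}


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str
    updated: date
    owner: str
    confidentiality: str


def select_context(chunks: List[Chunk], user_clearance: str, not_before: date) -> List[Chunk]:
    """Keep only chunks the user may read and that are recent enough."""
    allowed = LEVELS[user_clearance]
    return [
        c for c in chunks
        if LEVELS[c.confidentiality] <= allowed and c.updated >= not_before
    ]


def format_citations(chunks: List[Chunk]) -> List[str]:
    """Build numbered references to append to the answer."""
    return [
        f"[{i}] {c.source} ({c.updated.isoformat()}, owner: {c.owner})"
        for i, c in enumerate(chunks, start=1)
    ]
```

Filtering on metadata before the context reaches the model is what keeps a restricted or obsolete document out of an answer.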

If you need to standardize the connection between models and sources/tools, the Model Context Protocol (MCP) can become a structuring block, but it is not mandatory for a V1.

Guardrails are not a "nice to have"; they are the brakes of the vehicle.

In a minimal AI system, you need guardrails at three levels:

Inputs: filter or mask sensitive data, detect prompt injection (the OWASP Top 10 for LLM Applications is a useful reference here).

Outputs: constrained format, citations, refusal rules, confidence thresholds.

Actions: explicit confirmations, preview modes, idempotency, strict permissions.

As soon as your AI can "act" (write in a CRM, create a ticket, send an email), draw inspiration from an "action-first guardrails" logic, detailed in our content on agents and their protections (for example autonomous agents: guardrails and validation).
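The action-level guardrails (strict permissions, preview, explicit confirmation, idempotency) can be sketched as a thin wrapper around any tool call. `ActionGuard` and its in-memory store are hypothetical; a real deployment would persist idempotency keys.

```python
import hashlib
import json
from typing import Any, Callable, Dict, Iterable


class ActionGuard:
    """Wrap a side-effecting tool call with permission, confirmation and idempotency checks."""

    def __init__(self, allowed_actions: Iterable[str]) -> None:
        self.allowed_actions = set(allowed_actions)
        self._done: Dict[str, Any] = {}  # idempotency key -> earlier result (in memory only)

    @staticmethod
    def idempotency_key(action: str, payload: dict) -> str:
        """Same action and same payload always produce the same key."""
        raw = json.dumps({"action": action, "payload": payload}, sort_keys=True)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def execute(self, action: str, payload: dict, confirmed: bool,
                run: Callable[[dict], Any]) -> dict:
        if action not in self.allowed_actions:
            return {"status": "denied"}
        if not confirmed:
            # Preview mode: describe the action without performing it.
            return {"status": "preview", "action": action, "payload": payload}
        key = self.idempotency_key(action, payload)
        if key in self._done:
            # A retry must not create a second ticket, email, or CRM entry.
            return {"status": "duplicate", "result": self._done[key]}
        result = run(payload)
        self._done[key] = result
        return {"status": "executed", "result": result}
```

With this shape, the model can only propose an action; the side effect happens once, after a confirmation, and only for actions on the allow-list.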

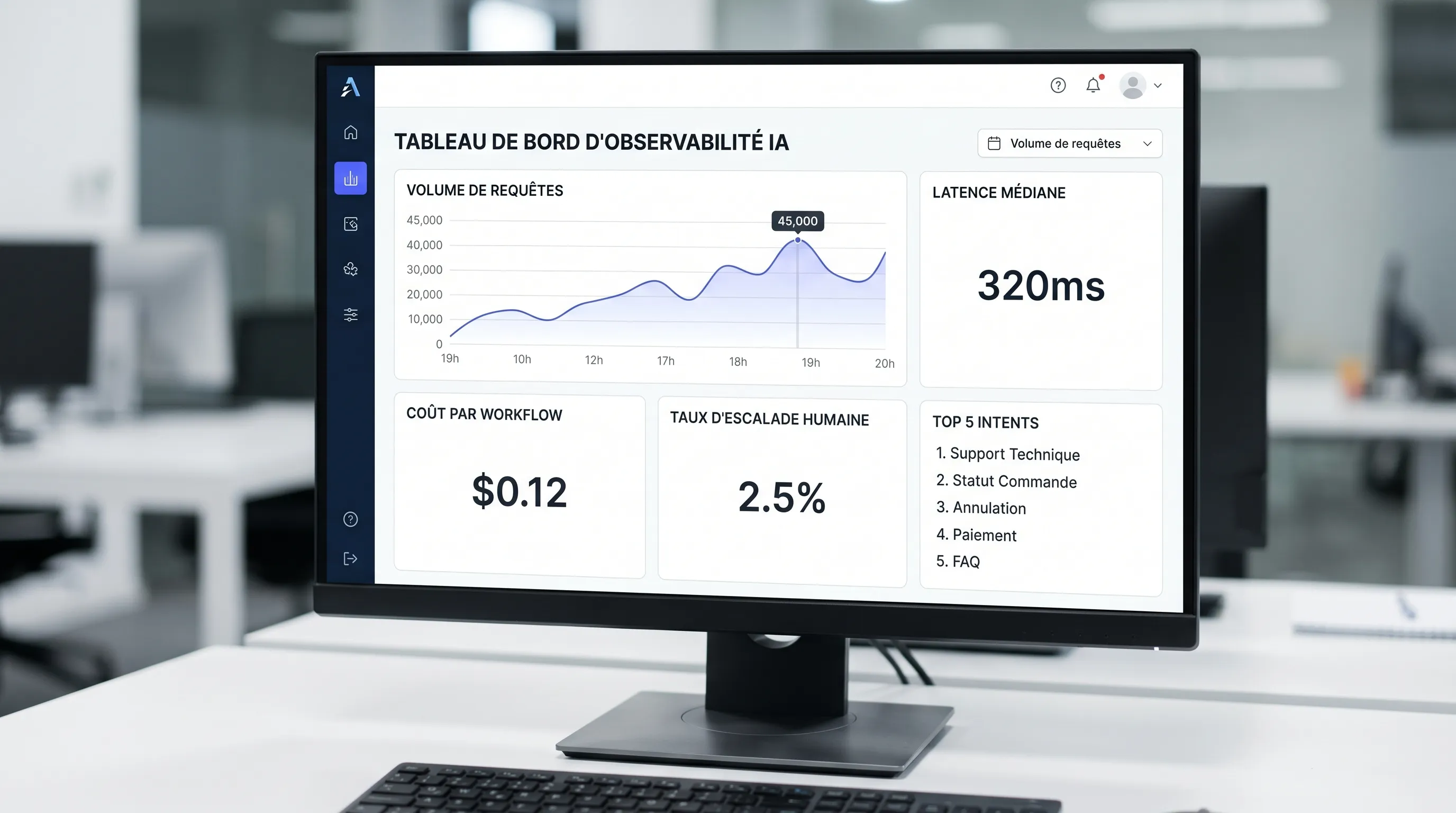

Without observability, you cannot industrialize.

Your minimum viable observability:

logs: prompt (or truncated version), retrieved context, response, tool calls, errors

metrics: latency, escalation rate, failure rate, satisfaction or quality signal

costs: cost per request, cost per workflow, top users, top intents

The goal is not to have a "super dashboard", but to be able to answer in 5 minutes:

"Why did the assistant fail?"

"How much does it cost per week, and why?"

"Which version introduced a regression?"
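To answer the cost question quickly, a few lines over the request logs are often enough. The sketch below assumes each log entry carries an intent and token counts; the prices in `PRICE_PER_1K` are placeholders, not real provider rates.

```python
from collections import defaultdict
from typing import Dict, Iterable

# Placeholder prices per 1,000 tokens; replace with your provider's actual rates.
PRICE_PER_1K = {"input": 0.5, "output": 1.5}


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of a single request from its token counts."""
    return (input_tokens * PRICE_PER_1K["input"]
            + output_tokens * PRICE_PER_1K["output"]) / 1000


def cost_by_intent(requests: Iterable[dict]) -> Dict[str, float]:
    """Total cost per intent, most expensive first."""
    totals: Dict[str, float] = defaultdict(float)
    for r in requests:
        totals[r["intent"]] += request_cost(r["input_tokens"], r["output_tokens"])
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


def over_budget(totals: Dict[str, float], weekly_budget: float) -> bool:
    """True when the summed cost exceeds the weekly budget (a trigger for an alert)."""
    return sum(totals.values()) > weekly_budget
```

Run over one week of logs, `cost_by_intent` gives the "top intents" view, and `over_budget` is the simplest possible alert condition.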

A reliable AI is an operable AI. In your minimal architecture, plan for:

a runbook (who does what, how to diagnose, how to roll back)

versioning (prompts, configs, indexes, rules)

degraded modes (e.g., "I don't know" response, human escalation, fallback to a deterministic flow)

A good test: if the "owner" is absent for a week, does the system continue to function without panic?
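Versioning and degraded modes can be combined in a small wrapper, sketched below. `ReleaseConfig`, the version strings, and the fallback message are assumptions; `call_model` stands for whatever function actually queries your model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ReleaseConfig:
    prompt_version: str
    index_version: str
    rules_version: str

    def tag(self) -> str:
        """One string to attach to every log line, so regressions can be traced to a release."""
        return (f"prompt={self.prompt_version};"
                f"index={self.index_version};rules={self.rules_version}")


def answer_with_fallback(question: str, call_model: Callable[[str], str],
                         config: ReleaseConfig) -> dict:
    """Return the model's answer, or a degraded 'I don't know' response if the call fails."""
    try:
        text = call_model(question)
        mode = "normal"
    except Exception:
        # Broad catch on purpose: any failure should degrade gracefully, not crash the workflow.
        text = "I don't know. I am forwarding your request to a colleague."
        mode = "degraded"
    return {"answer": text, "mode": mode, "release": config.tag()}
```

Rolling back then means redeploying an earlier `ReleaseConfig`, and the share of `"degraded"` responses becomes a metric the runbook can reference.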

Many teams over-architect because they start directly with agents. However, the right pattern depends on the risk level and the nature of the workflow.

| Need | Recommended pattern | Essential blocks | Dominant risk |
|---|---|---|---|
| Help write, summarize, rephrase | API (LLM) | Orchestration, output guardrails, logs, costs | Variable quality, sensitive data |
| Answer "truthfully" from an internal corpus | API + RAG | RAG with citations, doc access control, evaluation | Hallucinations, obsolete sources |
| Execute multi-tool actions | Guarded agent (tool-calling) | Permissions, confirmations, logging, idempotency, runbook | Incorrect actions, security |

This table is not theoretical: it helps you deliver a V1 faster, with a smaller risk surface.

A minimal architecture is not a dogma; it is a response to the most frequent failures observed in production.

| Symptom in production | Frequent cause | Missing minimal block |
|---|---|---|
| "It worked yesterday, not today" | prompt/config change without traceability | Versioning + logs |
| Plausible but false answers | missing or poor quality context | Serious RAG + citations + evaluation |
| AI gives too much sensitive info | vague permissions, unclassified data | IAM + minimization rules |
| Drifting costs | no control per use case | Cost metrics + quotas + alerts |
| Impossible to diagnose incidents | no actionable observability | Structured logs + tool-calling traces |
| Low adoption | tool outside workflow | Integrated entry point + UX + instrumentation |

A "reliable" V1 does not mean perfect. It means: bounded, measured, secured, and improvable.

Define a use case with:

a target user

an existing workflow (before/after)

a KPI and a baseline

a clear rule for "when to escalate"

For a complete method of moving to production, you can also rely on our guide AI development: key steps to move to production.

Build the complete path, even if each block is simple:

entry point

orchestration

context (RAG if necessary)

guardrails

logs and metrics

The rule: no "uninstrumented" V1.

Test on real and repeated cases:

a small set of "easy" questions (where the AI must be excellent)

a set of "trick" questions (where the AI must refuse or escalate)

a set of "sensitive data" questions
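The three test sets above can be turned into a small repeatable check. In this sketch, `EvalCase` and the behavior labels ("answer", "refuse", "escalate") are assumptions, and `assistant` is any callable that returns the behavior observed for a question.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class EvalCase:
    question: str
    category: str  # "easy", "trick" or "sensitive"
    expected: str  # expected behavior: "answer", "refuse" or "escalate"


def run_eval(cases: Iterable[EvalCase], assistant: Callable[[str], str]) -> Dict[str, float]:
    """Return the pass rate per category."""
    stats: Dict[str, Dict[str, int]] = {}
    for case in cases:
        bucket = stats.setdefault(case.category, {"passed": 0, "total": 0})
        bucket["total"] += 1
        if assistant(case.question) == case.expected:
            bucket["passed"] += 1
    return {cat: b["passed"] / b["total"] for cat, b in stats.items()}
```

Running the same cases after every prompt, index, or rule change gives a per-category pass rate to compare against the previous version.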

For the risk management approach, the NIST AI Risk Management Framework is a useful reference, even if you apply it lightly.

Deploy to a restricted group, with a simple ritual:

weekly review of incidents and doubtful answers

adjustment of context (sources), guardrails, and UX

KPI and cost tracking

If you are a CEO, CTO, or Head of Ops, you can qualify reliability with 6 questions:

Integration: is it used in the tool where the work is done, or on the side?

Truth: on critical topics, do we have sources and citations?

Control: who can see what, and who can do what?

Measurement: do we have a business KPI, and a baseline?

Costs: do we know the cost per week, and the cost per useful action?

Operations (Run): can we diagnose, fix, and roll back in less than a day?

If two answers are "no", you probably have a prototype, not an AI system.
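For teams that want to track this checklist over time, it can be encoded in a few lines. The question keys are shorthand for the six questions above, and reading "two answers" as "two or more" is an assumption of this sketch.

```python
from typing import Dict, List, Tuple

# Shorthand keys for the six questions, in the order listed above.
QUESTIONS = ["integration", "truth", "control", "measurement", "costs", "operations"]


def qualify(answers: Dict[str, bool]) -> Tuple[str, List[str]]:
    """Return ("prototype" or "ai_system", list of questions answered "no")."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"Unanswered questions: {missing}")
    gaps = [q for q in QUESTIONS if not answers[q]]
    # Assumption: two or more "no" answers mean the deployment is still a prototype.
    verdict = "prototype" if len(gaps) >= 2 else "ai_system"
    return verdict, gaps
```

The returned list of gaps doubles as a short backlog: each "no" points to a missing block of the minimal architecture.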

What is an enterprise AI system? An AI system is the combination of model + orchestration + data + security + guardrails + observability + operations, which allows delivering reliable value in a workflow.

What is the minimal architecture to deploy a reliable AI? An instrumented entry point, an orchestration layer, controlled access (IAM), a reliable context (often RAG), guardrails, observability, and a runbook.

Is RAG always necessary in an AI system? No. If the use case is writing or rephrasing without a "truth" stake, RAG may be unnecessary. As soon as the assistant must answer accurately based on internal content, RAG often becomes essential.

When to switch from an assistant to an agent that executes actions? When the workflow is frequent, well-bounded, and you can secure the actions (permissions, confirmations, idempotency, logs). Otherwise, start with API or RAG.

How to avoid cost drift in production? Measure the cost per workflow, add quotas and alerts, reduce the context, use caching when relevant, and remove usages that show no ROI.

If you want a reliable deployment without over-architecture, Impulse Lab can help you frame the right pattern (API, RAG, guarded agent), design the minimal architecture, integrate AI into your existing tools, and instrument KPIs, security, and operations.

Depending on your maturity, you can start with an AI opportunity audit (prioritization and scoping), a V1 delivered in short cycles, or adoption training to make usages reproducible. To discuss this, you can visit the Impulse Lab website and request an initial chat.


Leonard

Co-founder
